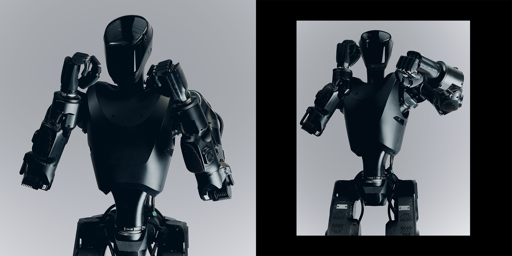

The Phantom MK-1 looks the part of an AI soldier. Encased in jet-black steel with a tinted glass visor, it conjures a visceral dread far beyond anything a typical humanoid robot evokes. “We think there’s a moral imperative to put these robots into war instead of soldiers,” says Mike LeBlanc, a 14-year Marine Corps veteran and a co-founder of Foundation, the company that makes Phantom. LeBlanc believes that giant armies of humanoid robots will eventually nullify each side’s tactical advantage in any conflict, much as nuclear deterrents do, greatly decreasing escalation risks. The counterargument, however, is chilling: that humanoid soldiers lower the political and ethical barriers to initiating conflict, blur responsibility for any abuses, and further dehumanize warfare. As companies like Foundation race to equip humanoids with lethal functionality, a parallel legal tussle is raging between AI-focused defense companies and international bodies seeking to codify what level of human control is appropriate in war.

Originally posted by u/timemagazine on r/ArtificialInteligence