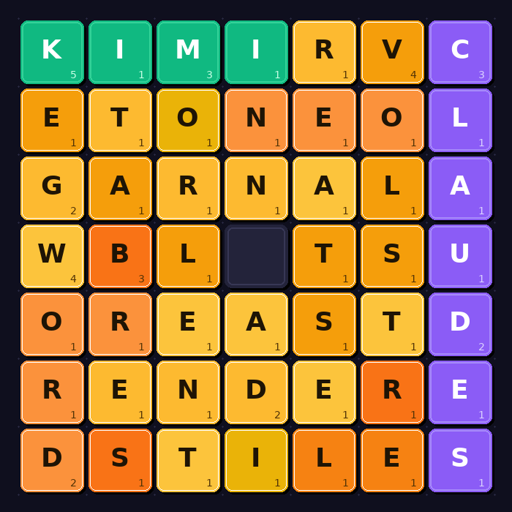

I run an AI Coding Contest where I pit LLMs against each other in real-time programming challenges. Kimi K2.6, an open-weights model from Moonshot AI, won Day 12, beating Claude, GPT-5.5, Gemini, and Grok. The challenge was a real-time sliding-tile puzzle in which bots compete to find long English words under a 10-second clock.

The more interesting result is how it won. Kimi slid tiles aggressively and kept finding words after the other models ran out. MiMo from Xiaomi never moved a single tile and still came second: two opposite strategies, nearly the same score. Claude and Grok also didn't slide, and that cost them on the larger boards, where reconstruction was the only way to score.

Kimi K2.6 scores 54 on the Artificial Analysis Intelligence Index; GPT-5.5 scores 60 and Claude 57. That's close, and Kimi's weights are public, so anyone can download and run it. Frontier labs have long held a capability lead that no open-weights model could match. That lead is now measurably small, and this contest is one data point in a pattern that has been building for months.

Originally posted by u/reditzer on r/ArtificialInteligence